I decided to publish a piece of software that I worked on during my Master’s thesis. During my time at SIMTech, I worked on robotics, 3D reconstruction of workpieces, and path teaching using Augmented Reality (AR).

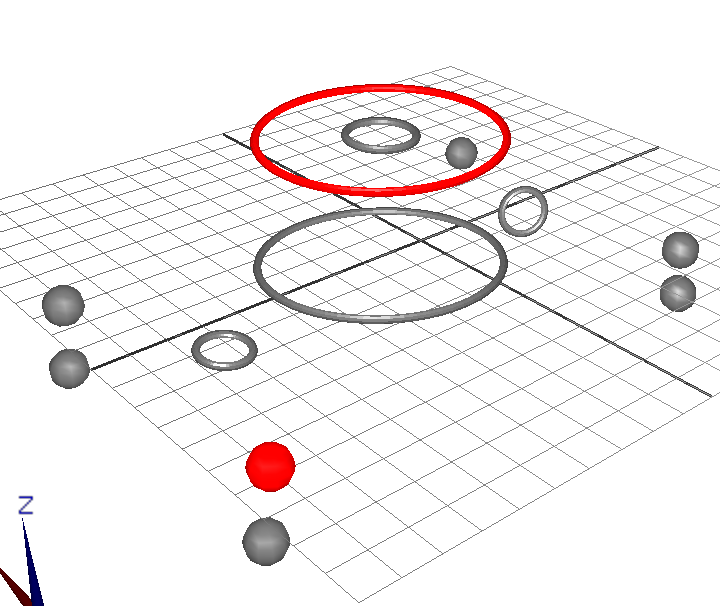

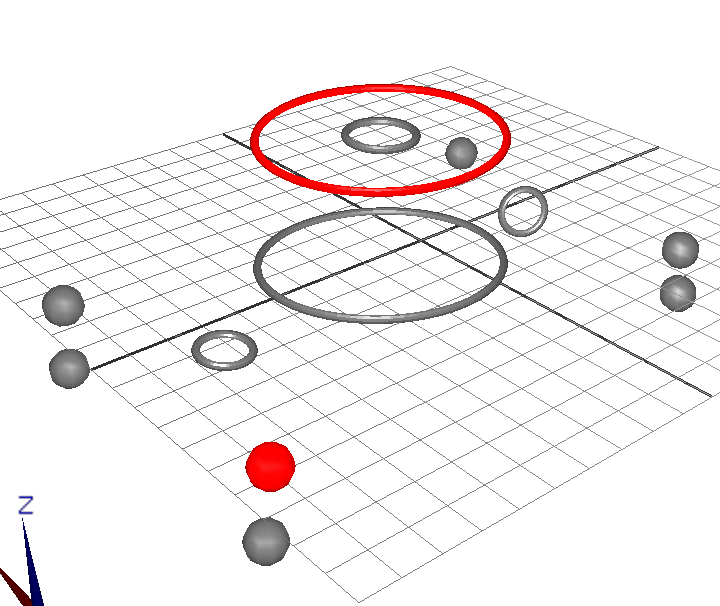

Part of our project was the task of reconstructing a 3D circle from its (elliptical) projections in several camera images. Assume you have several 2D ellipses, each one the projection of the same 3D circle seen from a different position; you want to find the 3D circle that matches these projections as well as possible.

One possible application is traffic sign pose estimation, i.e. estimating the position and orientation of a traffic sign relative to the car from the vehicle camera data.

My situation was slightly different: I had a cylindrical workpiece on which I wanted to perform a full 360-degree robotic weld. To achieve that, we took pictures of the workpiece from different orientations using a camera attached to the end-effector. These pictures, the robot pose and the pre-calibrated camera parameters were then used to reconstruct the workpiece in 3D space.

Several papers address this problem, but some of them require additional knowledge, such as the circle’s base plane, and others are very theoretical and hard to follow for a practising engineer. In the end, I used the method presented in the paper referenced below:

Soheilian, B.; Brédif, M., “Multi-view 3D Circular Target Reconstruction with Uncertainty Analysis”, ISPRS Annals of the Photogrammetry, Remote Sensing and Spatial Information Sciences, Vol. 2, Issue 3, pp 143-148, 2014

In my opinion, it is a very good paper and the approach is very advanced, but because of the amount of information it contains, it can be a bit difficult to follow for the average engineer or programmer. That is why I decided to publish my implementation and to explain the approach proposed by Soheilian and Brédif in a bit more detail. The major difference between my implementation and theirs is that their approach also optimizes the extrinsic camera parameters and the 2D ellipse data to provide the best results, while my simplified method assumes that the camera’s parameters (extrinsic and intrinsic) are known and exact.

The projection of a 3D circle onto a camera image is an ellipse, so these projections form the basis of the reconstruction approach. In this document, I will not focus on how to detect ellipses in a camera image or how to find the best-fitting ellipse, as this part of the algorithm is rather simple. In my experience, a simple Canny edge detector with subsequent contour detection, followed by ellipse fitting and filtering, usually works quite well. To fit an ellipse to a detected contour, I used an improved, numerically stable version of the basic algorithm by Fitzgibbon et al. This commonly used algorithm is described here:

Halír, R; Flusser, J., “Numerically Stable Direct Least Squares Fitting Of Ellipses”, Institute of Information Theory and Automation, Prague, 1998.
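To give an idea of what such a fit looks like, here is a minimal NumPy sketch of a direct least-squares ellipse fit in the spirit of Halír and Flusser. It is an illustration only and not the code I used; the function name `fit_ellipse` and the coefficient order (a, b, c, d, e, f) for the conic a·x² + b·xy + c·y² + d·x + e·y + f = 0 are assumptions made for this example.

```python
import numpy as np

def fit_ellipse(x, y):
    """Fit a*x^2 + b*x*y + c*y^2 + d*x + e*y + f = 0 to 2D points.

    Returns the six conic coefficients (a, b, c, d, e, f), defined up to scale.
    """
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    D1 = np.column_stack([x * x, x * y, y * y])      # quadratic part of the design matrix
    D2 = np.column_stack([x, y, np.ones_like(x)])    # linear part of the design matrix
    S1, S2, S3 = D1.T @ D1, D1.T @ D2, D2.T @ D2     # blocks of the scatter matrix
    T = -np.linalg.solve(S3, S2.T)                   # expresses (d, e, f) through (a, b, c)
    M = S1 + S2 @ T                                  # reduced scatter matrix
    M = np.array([M[2] / 2.0, -M[1], M[0] / 2.0])    # premultiply by the inverse constraint matrix
    _, vecs = np.linalg.eig(M)
    vecs = np.real(vecs)
    cond = 4.0 * vecs[0] * vecs[2] - vecs[1] ** 2    # 4ac - b^2 for each eigenvector
    a1 = vecs[:, np.argmax(cond)]                    # the only candidate with 4ac - b^2 > 0
    return np.concatenate([a1, T @ a1])
```

In practice the input points would be the pixel coordinates of one detected contour. Centering and scaling the points before the fit usually improves the conditioning further.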

In the subsequent paragraphs, I assume that you have already detected and found the best fitting ellipses that belong to the same 3D circle on different images.

What do I need to know?

In the following text, I assume that you are already familiar with the mathematical concepts listed below. If you’re not, you may want to look them up briefly, or you can simply keep reading. Some things are not as complicated as they look at first glance. After all, it’s no rocket science!

- Camera pinhole projection model. I assume that you know what the intrinsic and extrinsic parameters are and what their matrices look like.

- Matrix operations, multiplications, inverse, etc.

- 2D ellipse representation. You need to know how to express an ellipse as the solution of a conic equation.

- Quadrics and their duals (you don’t need a deep understanding of them, and I don’t have one either. It is enough to know what a quadric is and that a quadric has a dual).

- Eigenvectors and Eigenvalues.

So let’s get started!

Algorithm Implementation Details

Unprojecting a circle from several orientations is a highly non-trivial task and requires a skillful approach to mathematically express the relationship between the projections and the original 3D circle, using the lowest number of unknowns possible, while keeping an unconstrained approach. For this document, a simplified version of the algorithm presented in this research paper published by Soheilian and Brédif has been used.

This algorithm encodes all information about the circle in six unknowns: the three coordinates of the circle center C and the three components of the circle’s normal vector N.

With N and C, the orientation and position of the plane supporting the circle are fully described. The radius of the circle is encoded in the normal vector by defining the length of N to be equal to the radius r. The proposed parameterization is the minimal parameterization of 3D circles and is both unambiguous and unconstrained, so no additional conditions need to be set up.
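As a small illustration of this parameterization, the following sketch packs a circle into the six-element vector (C, N) and unpacks it again. The helper names `pack_circle` and `unpack_circle` are made up for this example.

```python
import numpy as np

def pack_circle(center, normal, radius):
    """Return the 6-vector (C, N), where N has the direction of the normal and length = radius."""
    n = np.asarray(normal, dtype=float)
    n = n / np.linalg.norm(n)                 # only the direction of the given normal matters
    return np.concatenate([np.asarray(center, dtype=float), radius * n])

def unpack_circle(params):
    """Split the 6-vector back into center, unit normal and radius."""
    C, N = params[:3], params[3:]
    radius = np.linalg.norm(N)                # the radius is stored as the length of N
    return C, N / radius, radius
```

Note that N and -N describe the same circle, so a solver may return either sign of the normal.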

The idea of the algorithm is to describe the ellipse and the 3D circle as quadrics. To simplify the calculation effort, both quadrics are transformed to their dual quadric. Taking the conic equation of a 2D ellipse, the quadric for an ellipse can be calculated:

This can be rewritten as follows:

E is a 3×3 symmetric matrix and represents the ellipse as point quadric. With this point-based conic, the dual conic E* of this quadric based on all tangent lines of E can be calculated.
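For reference, this step can be written out as follows. The coefficient names a to f are my naming for this note and may differ from the notation used in the paper.

```latex
a x^2 + b x y + c y^2 + d x + e y + f = 0
\quad\Longleftrightarrow\quad
\mathbf{x}^\top E \,\mathbf{x} = 0,
\qquad
\mathbf{x} = \begin{pmatrix} x \\ y \\ 1 \end{pmatrix},
\qquad
E = \begin{pmatrix}
a   & b/2 & d/2 \\
b/2 & c   & e/2 \\
d/2 & e/2 & f
\end{pmatrix}
```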

As a conic equation can be scaled without changing its validity, the determinant can be ignored and the adjugate matrix of E can be used to describe the dual conic of E. By additionally adding the ellipse constraint of

and scaling the conic equation in such a way that

is fulfilled, the dual quadric E* can be calculated based on the ellipse parameters as follows:
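In code, the dual conic can be obtained directly from the adjugate of E. The sketch below assumes the conic coefficients a to f of a·x² + b·xy + c·y² + d·x + e·y + f = 0 (my naming) and scales the result so that its lower-right element equals 1, which is one convenient normalization and not necessarily the one used in the paper. The function names are hypothetical.

```python
import numpy as np

def conic_matrix(a, b, c, d, e, f):
    """Symmetric 3x3 point-conic matrix E for a*x^2 + b*x*y + c*y^2 + d*x + e*y + f = 0."""
    return np.array([[a, b / 2.0, d / 2.0],
                     [b / 2.0, c, e / 2.0],
                     [d / 2.0, e / 2.0, f]], dtype=float)

def dual_conic(E):
    """Dual conic E* (adjugate of E), scaled so that E*[2, 2] == 1."""
    adj = np.linalg.det(E) * np.linalg.inv(E)   # adjugate of an invertible matrix
    return adj / adj[2, 2]                      # adj[2, 2] = ac - b^2/4 > 0 for an ellipse
```

A line l = (l1, l2, l3) with l1·x + l2·y + l3 = 0 is tangent to the ellipse exactly when lᵀ E* l = 0, which is a handy sanity check for the result.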

To describe a circle in 3D space, Soheilian and Brédif propose to start with a quadric representation of a sphere and flatten it over time. Their approach starts with the equation of an origin-centered sphere. Rewriting the sphere equation as a quadric yields:

In the next step, the sphere

is flattened over time by scaling the z coordinate. At t = 0, the quadric represents the same sphere. For

we get a disc of radius r at z = 0. The dual quadric of this 3D circle is gained as follows:
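One way to write the flattening down that is consistent with the description above is sketched here. The exact form of the time dependence, (1 + t) with t → ∞, is my assumption and may differ from the parameterization used in the paper; only the limit matters for the result.

```latex
x^2 + y^2 + z^2 = r^2
\;\Longleftrightarrow\;
\mathbf{X}^\top Q\,\mathbf{X} = 0,
\qquad Q = \operatorname{diag}(1,\,1,\,1,\,-r^2)

Q(t) = \operatorname{diag}(1,\,1,\,1+t,\,-r^2),
\qquad
Q^*(t) \;\propto\; Q(t)^{-1} \;\propto\;
\operatorname{diag}\!\left(r^2,\; r^2,\; \tfrac{r^2}{1+t},\; -1\right)

\lim_{t \to \infty} Q^*(t) \;\propto\; \operatorname{diag}(r^2,\; r^2,\; 0,\; -1)
```

The point quadric Q(t) degenerates in the limit, while the dual quadric stays well defined. This is why the circle is described by its dual.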

Using homogeneous coordinates, this circle can be translated and rotated using a transformation matrix

containing the 3×3 rotation matrix

and translation vector C. As defined previously, the radius information of the 3D circle is encoded in the length of the normal vector, thus we can write:

Transforming the dual quadric results in a quadric that fully describes a circle in 3D space. In the following formulas, vectors are now treated as a matrix with a single column to omit the vector arrow.

Further simplification yields:

With I3 being a 3×3 identity matrix. By using the notation for the cross product

Q*(C,N) can be rewritten as follows, where

denotes the matrix encoding the cross product

:
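The derivation can be summarized as follows. This is my own write-up under the assumptions that the flattened disc has the dual quadric diag(r², r², 0, -1), that n denotes the unit normal (third column of the rotation matrix R) and that N = r·n. The overall sign and scale are a matter of convention, since a dual quadric is only defined up to a scalar factor.

```latex
T = \begin{pmatrix} R & C \\ \mathbf{0}^\top & 1 \end{pmatrix},
\qquad
Q^*(C, N) = T \,\operatorname{diag}(r^2, r^2, 0, -1)\, T^\top
= \begin{pmatrix}
r^2 (I_3 - n n^\top) - C C^\top & -C \\
-C^\top & -1
\end{pmatrix}

r^2 (I_3 - n n^\top) = (N^\top N)\, I_3 - N N^\top = -[N]_\times^2,
\qquad
[N]_\times = \begin{pmatrix}
0 & -N_z & N_y \\
N_z & 0 & -N_x \\
-N_y & N_x & 0
\end{pmatrix}

Q^*(C, N) \;\propto\;
\begin{pmatrix}
C C^\top + [N]_\times^2 & C \\
C^\top & 1
\end{pmatrix}
```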

An ellipse is the projection of a 3D circle using a projection matrix P. Similarly, a dual quadric Q* is imaged as a dual conic E*:

The projection matrix P is the product of the camera’s intrinsic and extrinsic parameters and can be decomposed as

with K being the matrix of intrinsic parameters, R being the 3×3 rotation matrix of the extrinsic parameters and S being the original translation vector. If a 4×4 matrix of extrinsic parameters containing both the rotation and translation is given, S can be calculated by decomposing the transformation matrix into a rotation and translation matrix:

Now, S can be easily calculated by reversing the rotation of T:
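A minimal sketch of this decomposition is shown below. It assumes that the 4×4 extrinsic matrix maps world coordinates to camera coordinates (x_cam = R·X + t), in which case S = -Rᵀ·t is the camera center in world coordinates. If your matrix is defined the other way round (camera to world), S is simply its translation part. The function name is made up for this example.

```python
import numpy as np

def decompose_extrinsics(T):
    """Split a 4x4 world-to-camera transform into rotation R and camera center S."""
    T = np.asarray(T, dtype=float)
    R = T[:3, :3]          # rotation part
    t = T[:3, 3]           # translation part
    S = -R.T @ t           # undo the rotation: camera center in world coordinates
    return R, S
```

With this convention, K·[R | t] and K·R·[I3 | -S] are the same projection matrix.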

With both the extrinsic and intrinsic parameters known, the relationship between the 3D circle and the imaged ellipse can be solved as follows by defining M = KR:

To calculate the individual elements of the dual conic, the above equation can be rewritten for each matrix element if the matrix M is split row-wise:

i, j represent the row and column of the matrix, respectively. The dot represents the dot product while the x represents the cross product.
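The following NumPy sketch compares the matrix form E* = P·Q*·Pᵀ with an element-wise form built from the rows M_i of M = K·R. It assumes P = K·R·[I3 | -S], the dual quadric Q* = [[C·Cᵀ + [N]×², C], [Cᵀ, 1]] and the element-wise expression E*_ij = (M_i · (C - S))·(M_j · (C - S)) - (M_i × N) · (M_j × N). These are my reconstructions of the formulas, and all function names are hypothetical.

```python
import numpy as np

def skew(v):
    """Matrix [v]x such that skew(v) @ w == np.cross(v, w)."""
    x, y, z = v
    return np.array([[0.0, -z, y], [z, 0.0, -x], [-y, x, 0.0]])

def circle_dual_quadric(C, N):
    """4x4 dual quadric of the circle with center C and normal N (|N| = radius)."""
    Nx = skew(N)
    Q = np.ones((4, 4))                      # Q[3, 3] stays 1
    Q[:3, :3] = np.outer(C, C) + Nx @ Nx
    Q[:3, 3] = C
    Q[3, :3] = C
    return Q

def dual_conic_matrix_form(K, R, S, C, N):
    """E* = P Q* P^T with P = K R [I | -S]."""
    P = K @ R @ np.hstack([np.eye(3), -S.reshape(3, 1)])
    return P @ circle_dual_quadric(C, N) @ P.T

def dual_conic_elementwise(K, R, S, C, N):
    """Same dual conic, computed element by element from the rows of M = K R."""
    M = K @ R
    d = C - S
    E = np.empty((3, 3))
    for i in range(3):
        for j in range(3):
            E[i, j] = (M[i] @ d) * (M[j] @ d) - np.cross(M[i], N) @ np.cross(M[j], N)
    return E
```

Both functions should return the same matrix up to rounding. The element-wise version is the one that is convenient to differentiate when setting up a Jacobian.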

To compare the calculated projection of this circle to the estimated ellipse parameters Eobs* gained from the image contours, Eobs* must equal E* up to a scalar factor. As a conic equation can be scaled without affecting the described ellipse, an additional unknown homogeneous scale has to be calculated. The above equation yields six equations (E* is a symmetric 3×3 matrix), out of which one has to be used to calculate the unknown scale factor, resulting in five equations per image. As the model of the 3D circle has six unknown parameters, this means that at least two elliptical projections of the same circle must be known to solve this set of equations.

By scaling the parameters of the observed ellipse so that Eobs*33 = 1, the cost vector for the least-squares optimization can be written as follows:

vec() represents a vector, containing the five different elements of the matrix E* or Eobs*, respectively. A possible arrangement can be:
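One possible choice is sketched below. The ordering of the elements is my own pick for illustration, and any fixed ordering works as long as it is used consistently for E*, Eobs* and the Jacobi matrix. Dividing the predicted E* by its element E*33 is how I read the elimination of the unknown scale factor.

```latex
\operatorname{vec}(E) = \begin{pmatrix} E_{11} & E_{12} & E_{13} & E_{22} & E_{23} \end{pmatrix}^\top

\mathbf{f}(C, N) = \frac{1}{E^*_{33}(C, N)} \operatorname{vec}\!\big(E^*(C, N)\big)
 - \operatorname{vec}\!\big(E^*_{\mathrm{obs}}\big),
\qquad E^*_{\mathrm{obs},33} = 1
```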

For each ellipse, five equations can be set up. The system of equations can be solved iteratively using the Gauß-Newton algorithm. Given n projections of the circle, the Jacobi matrix is a 5n×6 matrix. Introducing the 3×3 matrices

F features the following derivatives:

Keeping the order used for vec() defined above, the first five rows of the Jacobi matrix can be set up as follows. Each image of the 3D circle contributes five rows to the Jacobi matrix.

The solution vector

can then be calculated in several iteration steps using the well-known Gauß-Newton algorithm. In the end, the algorithm returns the position, orientation and radius of the 3D circle, provided that a set of ellipses and the corresponding camera parameters (extrinsic and intrinsic) are given.
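To show how the pieces fit together, here is a compact Gauß-Newton sketch in NumPy. It is not a port of my MATLAB script: it replaces the analytic Jacobi matrix with a central finite-difference approximation to keep the example short, it assumes the dual conic form E* = M·((C - S)(C - S)ᵀ + [N]×²)·Mᵀ with M = K·R, and the vec() ordering in `IDX` as well as all function names are choices made for this example.

```python
import numpy as np

def skew(v):
    """Matrix [v]x such that skew(v) @ w == np.cross(v, w)."""
    x, y, z = v
    return np.array([[0.0, -z, y], [z, 0.0, -x], [-y, x, 0.0]])

def dual_conic(M, S, C, N):
    """Predicted dual conic of the circle (C, N) for one view with M = K R and camera center S."""
    d = (C - S).reshape(3, 1)
    Nx = skew(N)
    return M @ (d @ d.T + Nx @ Nx) @ M.T

# Hypothetical vec() ordering: E11, E12, E13, E22, E23 (E33 is fixed to 1 by the scaling).
IDX = ([0, 0, 0, 1, 1], [0, 1, 2, 1, 2])

def vec(E):
    """Five independent entries of a symmetric 3x3 matrix after scaling it to E[2, 2] == 1."""
    return (E / E[2, 2])[IDX]

def residuals(params, views, observed):
    """Stacked cost vector: five residuals per view."""
    C, N = params[:3], params[3:]
    return np.concatenate([vec(dual_conic(M, S, C, N)) - vec(E_obs)
                           for (M, S), E_obs in zip(views, observed)])

def gauss_newton(params0, views, observed, iterations=20, h=1e-6):
    """Estimate (C, N) from an initial guess; the Jacobian is approximated numerically."""
    p = np.asarray(params0, dtype=float).copy()
    for _ in range(iterations):
        r = residuals(p, views, observed)
        J = np.empty((r.size, 6))
        for k in range(6):
            dp = np.zeros(6)
            dp[k] = h
            J[:, k] = (residuals(p + dp, views, observed)
                       - residuals(p - dp, views, observed)) / (2.0 * h)
        step = np.linalg.lstsq(J, -r, rcond=None)[0]   # least-squares solution of J step = -r
        p += step
        if np.linalg.norm(step) < 1e-12:
            break
    return p[:3], p[3:]
```

Here `views` is a list of (M, S) pairs and `observed` the list of fitted dual conics, one per image. Like any Gauß-Newton scheme, this needs a reasonable initial guess, and the returned N may come out with either sign, since N and -N describe the same circle.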

At the moment, the implementation of the algorithm assumes that the intrinsic parameters of the camera do not change between images; however, simple adaptations can be made to make the algorithm suitable for stereo cameras.

The algorithm was successfully tested using a MATLAB throw-away implementation before it was integrated into the C++ software project, where it was successfully used for robotic welding.

You can download the MATLAB script for this algorithm on my GitHub page.